Data Quality Implementation for Enterprise Data Warehouse

Data quality implementation is important in the context of Data Warehouse and Business Intelligence. This blog focuses on why this is important and how it can be implemented.

Importance of integrating quality data to Enterprise Data Warehouse

A Data Warehouse is an integral part of those enterprises which want to have clear business insights from customer and operational data. It has been observed that fundamental problems arise in populating a warehouse with quality data from various multiple sources systems. Let us see in greater detail.

1. Impact of Erroneous data being integrated to DW

Many organizations grapple with poor data quality which ultimately results in poor decision-making. After all, decisions are no better than the data on which they are based. Reliable, relevant, and complete data supports organizational efficiency and is a cornerstone of sound decision-making Poor quality data could be an obvious reason for the malfunctioning and operational inefficiency of an organization. The negative implications of poor data can be felt in terms of decreased customer satisfaction and trust, high running costs, poor business decision and performance

Download Whitepaper

2. Cost of Integrating poor quality Data to DW

Any kind of anomalies and impurities in data such as data incompleteness, incorrectness, Integrity violation etc. could avert its effective utilization, disabling high performance, hamper accurate processing of the results etc. Conclusions gained by data analysis could lead to faulty decisions once the data warehouse is polluted with bad data.

Let us see few facts given below

- 75% of all the data integration projects has been reported to have either over-run their budgets or have met a complete failure

- 70% organizations have identified costs stemming from dirty data

- 33% of organizations have delayed or canceled new IT systems because of poor data

- Business intelligence (BI) projects often fail due to dirty data, so it is imperative that BI-based business decisions are based on clean data

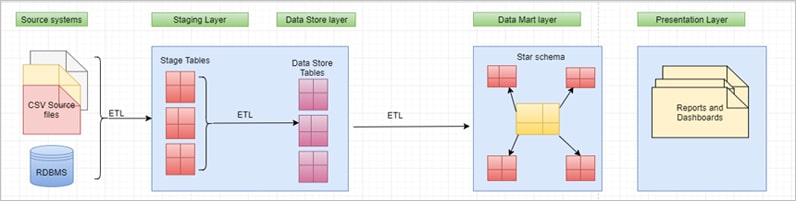

DWBI Architecture without Data quality implemented

Data from source is extracted as it is and loaded into stage tables (Landing layer) without any transformation using ETL. Post which data from Stage tables will be extracted and loaded to Data Store layer (Persistent layer). As a final process, data from DS layer will be extracted, transformed and loaded into Data Marts/DW.

The problem with this approach is, there are no Data quality checks implemented in the source data and because of which Data Warehouse might get populated with poor quality data which could lead into wrong analysis and faulty decisions.

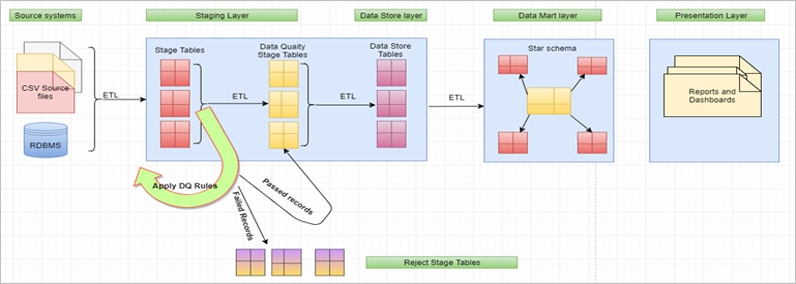

DWBI Architecture with Data quality implemented

Once the data is loaded into stage tables, ETL will trigger Data quality Implementation check process on the stage table data. For each stage table, ETL picks up the DQ Rules (Query) defined in the DQ meta data table and executes to identify which all are the records violating the rules. Accordingly, this process will mark/update those bad quality records with the respective DQ rule IDs. Single record can be failed due to multiple rules and those DQ rule IDs will be updated against those records.

Once the DQ process is completed, there is another ETL/Process that picks up all the bad quality records from Stage table and moves to Reject tables. These records can be identified by applying a filter “.DQ_Rule_ID is NOT NULL”. Same way another ETL/process that picks up good Data Quality Implementation from Stage to Data Quality stage and from there to Data Store and DW/Data Marts.

As an illustration of implementation, following section details how this can be achieved using Pentaho Data Integration (PDI).

© GrayMatter Software Services Pvt Ltd 2006 -2019